AI Product Design

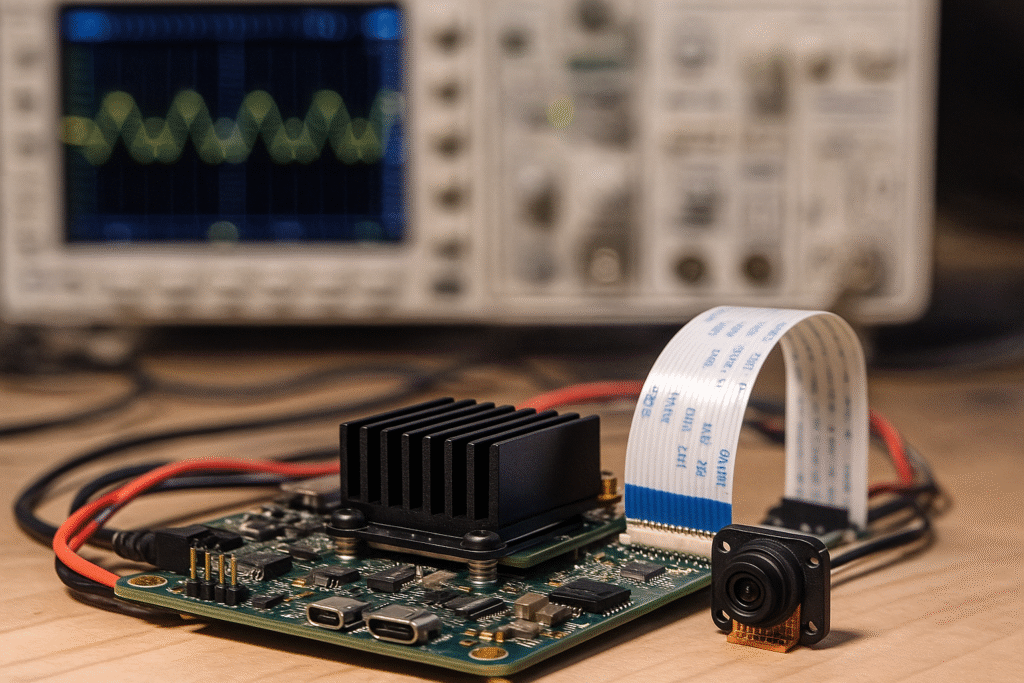

We add intelligence to products so they can see, listen, predict, and act, whether on the device (edge) or in the cloud. From choosing the right model to validating accuracy and safety, we design AI that fits your cost, power, and privacy needs, and that actually ships.

What we build

On-device AI (Edge): tiny-ML for MCUs/MPUs, vision models on NPUs/GPUs, DSP pipelines

Cloud AI: scalable inference APIs, retraining pipelines, data labeling & drift monitoring

Human interface: voice wake/commands, gesture detection, anomaly alerts, smart UIs

Safety & governance: guardrails, bias checks, explainability, and audit logs

Typical use cases

Quality inspection • Predictive maintenance • Presence/people counting • Gesture/voice control • Document/scanner intelligence • Power/energy optimization • Smart wearables & appliances

Built-in essentials

Accuracy you can trust: curated datasets, test/validation sets, confusion matrix targets

Latency & power: model compression (quantization, pruning), selecting the right silicon

Privacy & security: on-device processing when needed, encrypted data and model files

OTA & lifecycle: telemetry, A/B rollouts, automatic fallback/rollback

Deliverables

Model cards (accuracy, latency, memory, failure modes)

Edge firmware or runtime container with inference code

Data pipeline + retraining scripts; MLOps templates

Test harness, golden datasets, certification support (EMC/safety for hardware products)

Documentation: APIs, tuning knobs, deployment playbook

How we work

Discover & define: success metrics, constraints, and failure boundaries

Data & baseline: collect/label a starter set; train a quick baseline to prove feasibility

Engineer & optimize: select models/hardware; compress and tune for target latency/power

Validate: field trials; robustness testing (lighting, motion, noise, occlusion); guardrails

Deploy & improve: OTA rollout; monitor drift; scheduled retrains and model refresh
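As an illustration of the "monitor drift" step, one common approach is to compare the distribution of a feature (or of model confidence scores) in the field against a reference sample from training. The sketch below uses the Population Stability Index (PSI); the function name `psi`, the bin count, and the 0.2 alert threshold are assumptions chosen for illustration (0.2 is a widely quoted rule of thumb), not values from a specific project.

```python
import math
from typing import List, Sequence


def psi(reference: Sequence[float], live: Sequence[float],
        bins: int = 10, eps: float = 1e-6) -> float:
    """Population Stability Index between a reference and a live sample.

    Both samples are assumed to be non-empty. Bin edges come from the
    reference sample; live values outside that range fall into the edge bins.
    """
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins or 1.0  # avoid zero width for constant data

    def histogram(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clamp into the edge bins
            counts[idx] += 1
        total = len(values)
        # eps keeps empty bins from producing log(0) or division by zero
        return [max(c / total, eps) for c in counts]

    ref_p = histogram(reference)
    live_p = histogram(live)
    return sum((l - r) * math.log(l / r) for r, l in zip(ref_p, live_p))


# Synthetic data for illustration only: the live sample is shifted upward.
reference = [i / 100 for i in range(100)]
shifted = [0.5 + i / 200 for i in range(100)]

DRIFT_THRESHOLD = 0.2  # assumed rule-of-thumb alert level
if psi(reference, shifted) > DRIFT_THRESHOLD:
    print("drift detected: schedule a retrain or review")
```

In practice a check like this would run per feature on a schedule, and a sustained alert would feed the retraining step rather than trigger it automatically.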

Edge vs cloud—how do we choose?

We balance latency, power, privacy, and connectivity; often a hybrid works best.

How accurate will it be?

We set target metrics up front and iterate on real data, including corner cases.
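To make "target metrics up front" concrete, here is a minimal sketch of checking per-class precision and recall, derived from a confusion matrix, against agreed targets. The helper names, the class labels, the counts, and the target values are all hypothetical and chosen only to illustrate the idea.

```python
from typing import Dict, List, Tuple


def per_class_metrics(confusion: List[List[int]],
                      labels: List[str]) -> Dict[str, Dict[str, float]]:
    """confusion[i][j] = number of samples of true class i predicted as class j."""
    metrics: Dict[str, Dict[str, float]] = {}
    n = len(labels)
    for k, name in enumerate(labels):
        tp = confusion[k][k]
        fn = sum(confusion[k]) - tp                         # missed samples of class k
        fp = sum(confusion[i][k] for i in range(n)) - tp    # other classes called k
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        metrics[name] = {"precision": precision, "recall": recall}
    return metrics


def unmet_targets(metrics: Dict[str, Dict[str, float]],
                  targets: Dict[str, Dict[str, float]]
                  ) -> List[Tuple[str, str, float, float]]:
    """Return (class, metric, actual, target) for every metric below its target."""
    return [
        (cls, name, metrics[cls][name], goal)
        for cls, goals in targets.items()
        for name, goal in goals.items()
        if metrics[cls][name] < goal
    ]


# Hypothetical quality-inspection example; counts are illustrative, not real results.
labels = ["ok", "defect"]
confusion = [[90, 10],
             [5, 45]]
targets = {"defect": {"recall": 0.95, "precision": 0.80}}

metrics = per_class_metrics(confusion, labels)
failures = unmet_targets(metrics, targets)
print(failures)
```

Here the "defect" class meets its precision target but misses its recall target, which is the kind of gap that would drive the next round of data collection.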

Can this run on a small MCU?

Yes—tiny-ML is viable for many tasks using quantized models and efficient feature extraction.
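For readers curious what "quantized" means here, the sketch below shows the basic arithmetic of 8-bit affine quantization: floating-point values are mapped to integers in [-128, 127] using a scale and a zero point, cutting storage to a quarter of 32-bit floats. It is a simplified illustration under stated assumptions (a single scale for the whole list, made-up weight values, hypothetical function names); real toolchains add calibration and per-tensor or per-channel handling.

```python
from typing import List, Tuple


def quantize_int8(values: List[float]) -> Tuple[List[int], float, int]:
    """Affine quantization: real ~= scale * (q - zero_point), with q in [-128, 127]."""
    # Extend the range to include 0.0 so that zero is exactly representable.
    lo = min(min(values), 0.0)
    hi = max(max(values), 0.0)
    scale = (hi - lo) / 255.0 or 1.0  # fall back to 1.0 if all values are zero
    zero_point = int(round(-128 - lo / scale))
    q = [
        min(127, max(-128, int(round(v / scale)) + zero_point))
        for v in values
    ]
    return q, scale, zero_point


def dequantize(q: List[int], scale: float, zero_point: int) -> List[float]:
    """Map int8 codes back to approximate real values."""
    return [scale * (x - zero_point) for x in q]


# Made-up weights for illustration only.
weights = [-1.0, -0.25, 0.0, 0.5, 1.5]
q, scale, zero_point = quantize_int8(weights)
restored = dequantize(q, scale, zero_point)

max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(q, scale, zero_point)
print("max round-trip error:", max_error)
```

The round-trip error stays within about half a quantization step (`scale / 2`), which is why accuracy is re-validated after compression rather than assumed.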